Your analytics

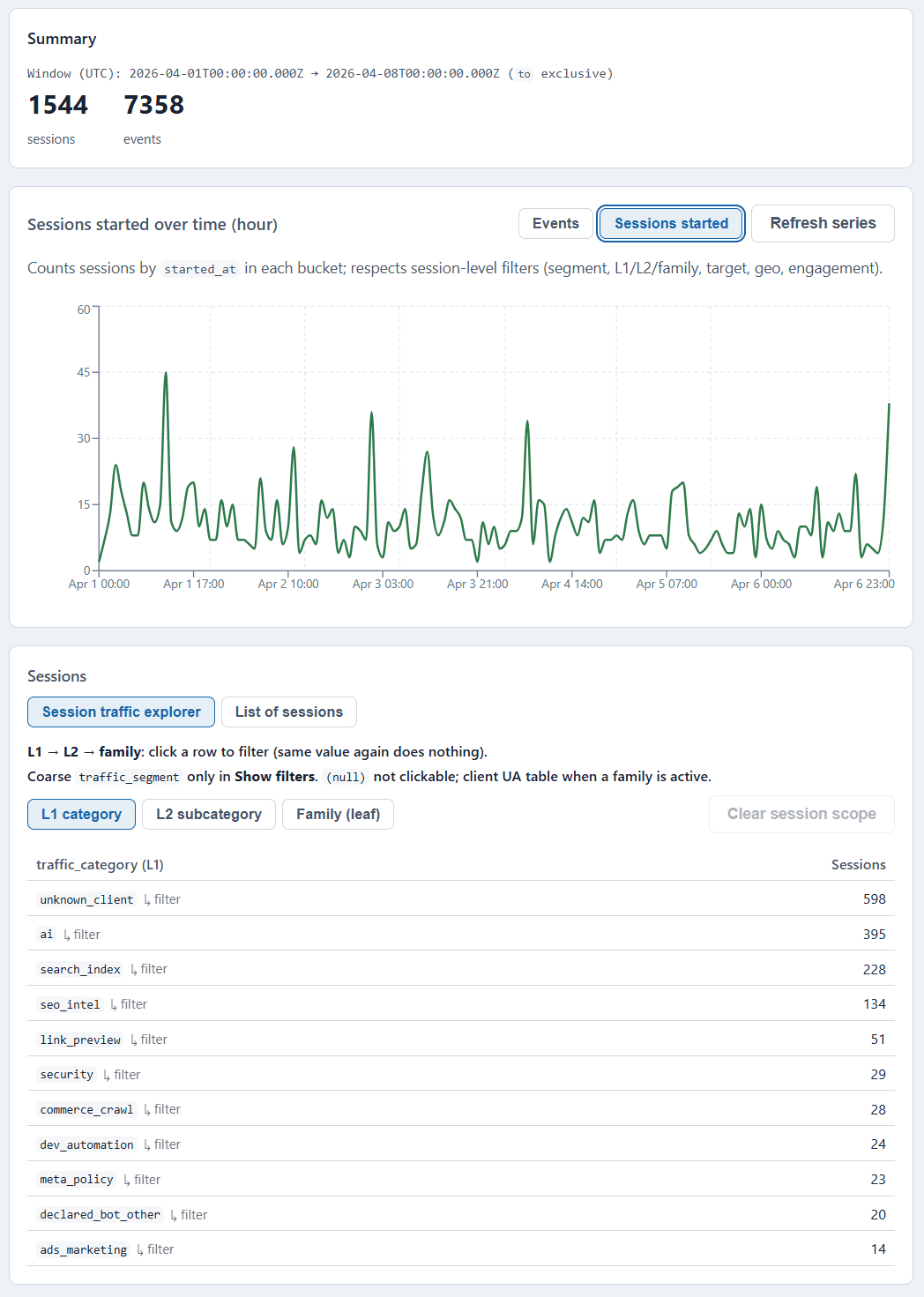

sees 60% of traffic.

The rest is invisible.

Google Analytics, Plausible, and Fathom all miss AI crawlers, SEO bots, link preview fetchers, and automated scrapers. None of those clients run JavaScript, so the tracking snippet never fires for them.

Logwick reads your raw server logs and classifies every single request: real users, AI agents, social previews, SEO tools, security probes, and more.
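To make the idea concrete, here is a minimal sketch of log-based classification. It is not Logwick's actual code. It assumes the server writes the common "combined" access log format, and the names `parseLine`, `classify`, `RULES`, and `PROBE_PATHS`, the category labels, and the short pattern lists are all hypothetical and chosen only for illustration.

```typescript
// Hypothetical sketch, not Logwick's implementation.
type Category =
  | "ai_agent"
  | "social_preview"
  | "seo_tool"
  | "security_probe"
  | "real_user";

interface LogEntry {
  path: string;
  status: number;
  userAgent: string;
}

// Combined log format (assumed):
// ip ident user [time] "METHOD path PROTO" status bytes "referer" "user-agent"
const COMBINED =
  /^(\S+) \S+ \S+ \[([^\]]+)\] "(\S+) (\S+) [^"]*" (\d{3}) (\S+) "([^"]*)" "([^"]*)"$/;

// Parse one access log line; returns null for lines that do not match.
function parseLine(line: string): LogEntry | null {
  const m = COMBINED.exec(line);
  if (!m) return null;
  return { path: m[4], status: Number(m[5]), userAgent: m[8] };
}

// Illustrative user-agent patterns only; a real list would be far longer.
const RULES: Array<[Category, RegExp]> = [
  ["ai_agent", /GPTBot|ClaudeBot|PerplexityBot|CCBot/i],
  ["social_preview", /facebookexternalhit|Twitterbot|Slackbot|Discordbot/i],
  ["seo_tool", /AhrefsBot|SemrushBot|MJ12bot/i],
];

// Paths that are typically requested only by vulnerability scanners.
const PROBE_PATHS = /^\/(\.env|\.git|wp-login\.php|phpmyadmin)/i;

// Classify a parsed entry. Falling through to "real_user" is a
// simplification: unknown bots would be mislabeled here.
function classify(entry: LogEntry): Category {
  if (PROBE_PATHS.test(entry.path)) return "security_probe";
  for (const [category, pattern] of RULES) {
    if (pattern.test(entry.userAgent)) return category;
  }
  return "real_user";
}

// Usage: classify a single line taken from an access log.
const line =
  '203.0.113.7 - - [10/Oct/2024:13:55:36 +0000] "GET /blog HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.1; +https://openai.com/gptbot)"';
const entry = parseLine(line);
console.log(entry ? classify(entry) : "unparsed");
```

None of this depends on the client running JavaScript. The server records the user agent and path for every request, so a request that never loads a tracking snippet still leaves a line to classify.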

No tracking snippet. No SaaS account. No data leaving your server.

Open-source · AGPL-3.0 · Node.js 20+ · SQLite on disk · Zero external calls